Support vector machine model for classification

Beijing Institute of Technology | Ming-Jian Li

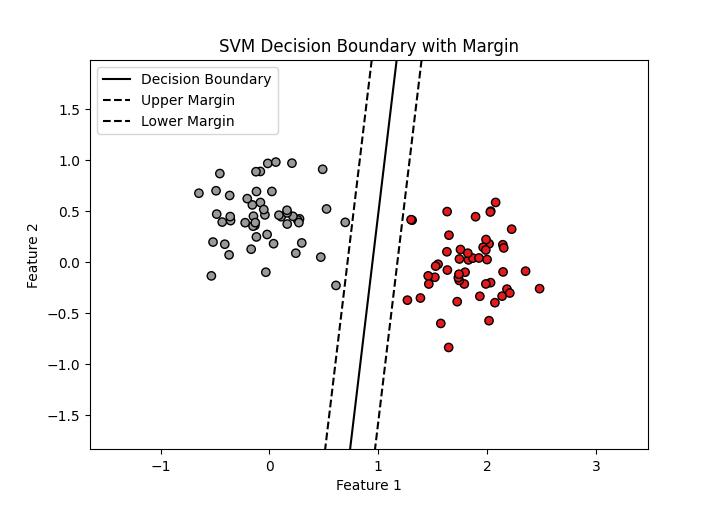

The following Python code accompanies the course on Artificial Intelligence and Simulation Science. It fits a linear support vector machine (SVM) classifier to two synthetic classes of 2-D points, plots the decision boundary with its margins, and predicts the labels of two new points.
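
As a minimal sketch of what a fitted linear SVM computes: it scores a point with f(x) = w · x + b and assigns the class by the sign of f(x). The snippet below checks this against scikit-learn's own `decision_function` and `predict`. The toy data and the names `X_demo`, `y_demo`, `clf`, `w_demo`, `b_demo`, `f_manual`, and `labels_manual` are illustrative assumptions and are separate from the course code.

```python
import numpy as np
from sklearn.svm import SVC

# Hypothetical toy data: two 2-D clusters (not the course data).
rng = np.random.default_rng(0)
X_demo = np.vstack([
    rng.normal(loc=[2.0, 0.0], scale=0.3, size=(20, 2)),
    rng.normal(loc=[0.0, 0.5], scale=0.3, size=(20, 2)),
])
y_demo = np.array([0] * 20 + [1] * 20)

clf = SVC(kernel="linear").fit(X_demo, y_demo)
w_demo = clf.coef_[0]        # weight vector of the separating line
b_demo = clf.intercept_[0]   # bias term

# Decision value f(x) = w . x + b, computed by hand.
f_manual = X_demo @ w_demo + b_demo

# In the binary case, f(x) > 0 maps to the second entry of clf.classes_.
labels_manual = clf.classes_[(f_manual > 0).astype(int)]

print("scores match:", np.allclose(f_manual, clf.decision_function(X_demo)))
print("labels match:", np.array_equal(labels_manual, clf.predict(X_demo)))

# Perpendicular distance from the boundary to each margin line f(x) = +/-1.
print("margin half-width:", 1 / np.linalg.norm(w_demo))
```

The same weight vector also fixes the margin: the lines f(x) = ±1 lie at distance 1/‖w‖ on either side of the decision boundary.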

```python
from sklearn.svm import SVC
import numpy as np
import matplotlib.pyplot as plt

# Generate data: two Gaussian clusters of 50 points each
np.random.seed(11)

mean_1 = [1.8, 0]
cov_1 = [[0.1, 0], [0, 0.1]]
mean_2 = [0, 0.4]
cov_2 = [[0.1, 0], [0, 0.1]]

X1 = np.random.multivariate_normal(mean_1, cov_1, 50)
y1 = np.zeros(50)

X2 = np.random.multivariate_normal(mean_2, cov_2, 50)
y2 = np.ones(50)

X = np.concatenate((X1, X2), axis=0)
y = np.concatenate((y1, y2))

# Support vector classification
svc = SVC(kernel='linear')
svc.fit(X, y)

# Plot data
plt.scatter(X[:, 0], X[:, 1], c=y, cmap=plt.cm.Set1, edgecolor='k')

# Plot decision boundary: w[0] * x1 + w[1] * x2 + b = 0
w = svc.coef_[0]
b = svc.intercept_[0]
x_plot = np.linspace(min(X[:, 0]) - 1, max(X[:, 0]) + 1, 10)
y_plot = -w[0] / w[1] * x_plot - b / w[1]
plt.plot(x_plot, y_plot, 'k-', label='Decision Boundary')

# Plot margin
# The margin lines satisfy w . x + b = +/-1 and lie at perpendicular
# distance 1 / ||w|| from the boundary. Convert that distance into a
# vertical offset using the slope of the boundary.
margin = 1 / np.linalg.norm(w)
slope = -w[0] / w[1]
offset = margin * np.sqrt(1 + slope ** 2)

y_upper = y_plot + offset
y_lower = y_plot - offset

plt.plot(x_plot, y_upper, 'k--', label='Upper Margin')
plt.plot(x_plot, y_lower, 'k--', label='Lower Margin')

# Set the x and y limits for the plot
plt.xlim([min(X[:, 0]) - 1, max(X[:, 0]) + 1])
plt.ylim([min(X[:, 1]) - 1, max(X[:, 1]) + 1])

plt.xlabel('Feature 1')
plt.ylabel('Feature 2')
plt.legend(loc='upper left')
plt.title('SVM Decision Boundary with Margin')
plt.show()

# Predict the labels of two new points
X_new = np.array([[2, 0.5], [-1.0, -2.0]])
y_pred = svc.predict(X_new)

print("Predicted Labels:", y_pred)
```

The result is as follows: